Why Agile Estimation Is Theater: The Case Against Story Points

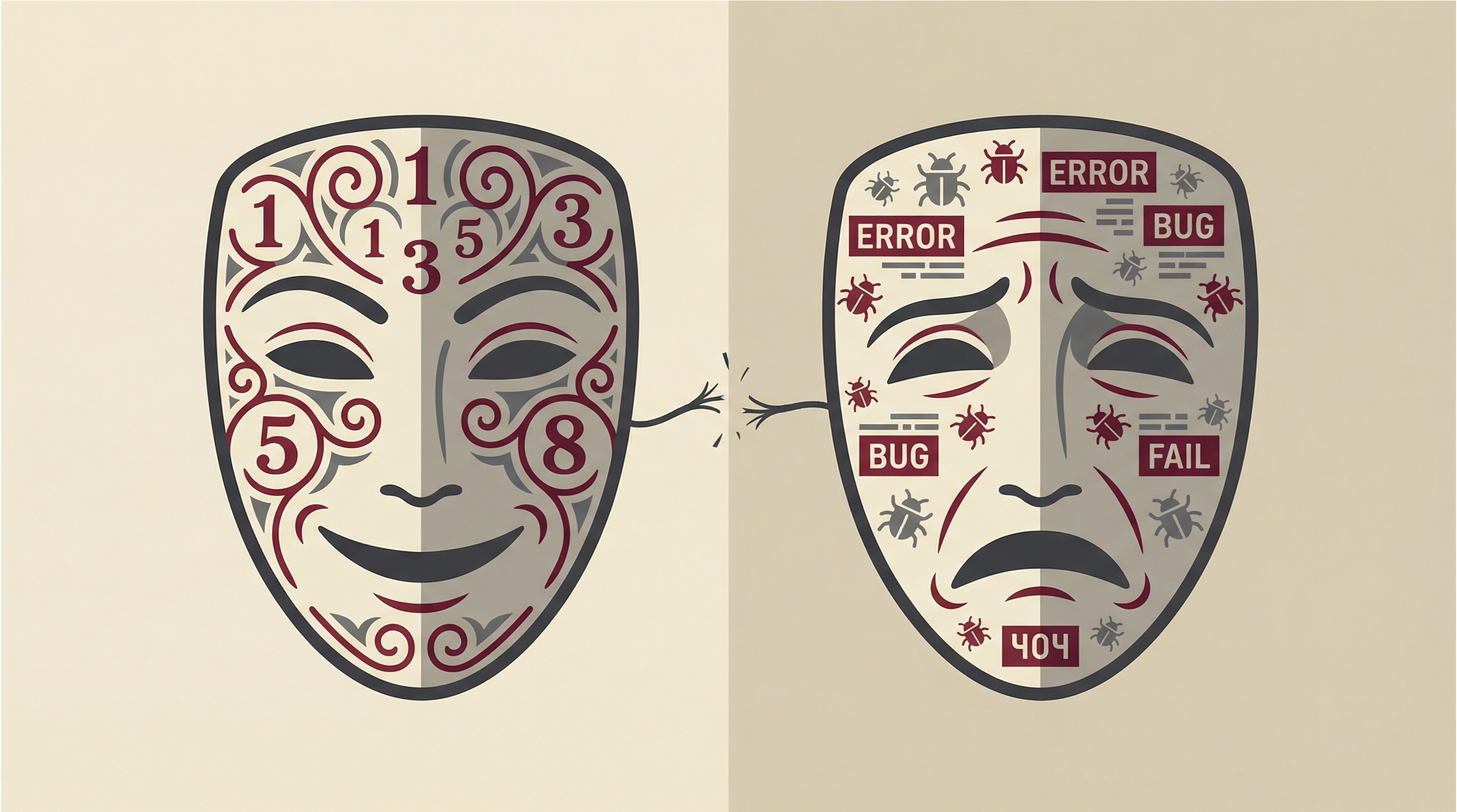

Every Wednesday at 2 PM, twelve developers file into a conference room. For the next ninety minutes, they'll hold up cards with Fibonacci numbers, debate whether a feature is a 5 or an 8, and pretend they can predict how long work will take in a system that changes daily.

This is your modern Agile estimation ceremony. It's theater. And everyone knows it.

The dirty secret: all those story points, all that calibration training, all those hours in planning poker—none of it makes your estimates more accurate. You've built an entire industry around the delusion that you can predict software development with meaningful precision.

You can't. Stop pretending.

The Estimation Cult: How Agile Turned Guessing into Pseudoscience

Somewhere along the way, Agile transformed an honest acknowledgment of uncertainty into a religion of false precision.

The original manifesto said "responding to change over following a plan." You ended up with teams spending 15-20% of their sprint time estimating and re-estimating work, manufacturing certainty where none exists.

Story points were supposed to free you from the tyranny of time estimates. Instead, you created something worse: a pseudoscientific measurement system that pretends to be objective while remaining entirely subjective.

Here's what actually happens in your estimation sessions:

Everyone is guessing. Your senior engineer with ten years of experience? Educated guess. Your junior developer? Wild guess. Your tech lead? Political guess designed to protect the team from unreasonable deadlines.

None of them know how long the work will actually take. They can't. Software development is fundamentally a discovery process. You don't know what you're building until you build it. You don't know what problems you'll encounter until you encounter them. You don't know what the requirements actually mean until you try to implement them.

But Agile doesn't want to hear this. Agile wants you to calibrate.

Calibration Training: The Latest Snake Oil for Software Teams

The estimation industrial complex has an answer when your estimates prove wildly inaccurate: you need better calibration.

Calibration training promises to transform your team into an estimation machine. You'll learn to "align on complexity." You'll practice estimating with reference stories. You'll conduct retrospectives on estimate accuracy and adjust your baseline.

It's sophisticated-sounding bullshit.

Real developers struggling with calibration reveal the truth: even when teams track their velocity religiously, even when they conduct elaborate calibration exercises, estimates remain wildly inaccurate.

One developer describes the Sisyphean task: "I've been trying to calibrate my estimations but I always end up way off." Another admits their team's velocity swings wildly despite consistent estimation practices. A third notes that no matter how much they refine their process, actual completion times bear little relationship to estimated points.

This isn't a failure of discipline. It's not because developers are lazy or inexperienced.

It's because accurate software estimation is effectively impossible for complex work.

You're estimating work in a system with incomplete information about requirements, unknown technical dependencies that reveal themselves during implementation, constantly changing codebases where last sprint's architecture is obsolete, integration points with third-party systems that fail in novel ways, human communication failures that cause rework, and emergent complexity that only appears when components interact.

No amount of calibration training fixes fundamental uncertainty.

Yet teams persist because it gives management the illusion of control. Executives want to know when features will ship. Product managers want to plan roadmaps. So you pretend story points give you predictive power.

They don't. They just give you plausible deniability when you're wrong.

The Hidden Costs of Estimation Ceremonies

Let's talk about what estimation theater actually costs.

Time Hemorrhaging

A typical two-week sprint for an eight-person team:

- 90-minute backlog refinement

- 2-hour sprint planning with estimation

- 30 minutes re-estimating carry-over work

- 15 minutes per story for clarification that reveals estimation was wrong

That's 4-6 hours per sprint per developer.

For an eight-person team running 26 two-week sprints a year: 832 to 1,248 hours. That's roughly half of a full-time engineer's year spent on nothing but estimation work.
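
Here is that arithmetic as a small sketch you can rerun with your own numbers. It assumes 26 two-week sprints in a year and a 2,080-hour full-time year. Both are assumptions for illustration, and the function and constant names are made up.

```python
# Rough annual cost of estimation ceremonies for one team.
# Assumptions (not measured data): 26 two-week sprints per year,
# and 2,080 working hours in a full-time year (52 weeks x 40 hours).

def annual_estimation_hours(hours_per_dev_per_sprint: float,
                            team_size: int,
                            sprints_per_year: int = 26) -> float:
    """Total person-hours the team spends estimating in a year."""
    return hours_per_dev_per_sprint * team_size * sprints_per_year


FULL_TIME_HOURS_PER_YEAR = 52 * 40

low = annual_estimation_hours(4, team_size=8)
high = annual_estimation_hours(6, team_size=8)

print(f"{low:.0f} to {high:.0f} hours per year")
print(f"{low / FULL_TIME_HOURS_PER_YEAR:.0%} to "
      f"{high / FULL_TIME_HOURS_PER_YEAR:.0%} of one full-time engineer")
```

Change `sprints_per_year` or the per-developer hours if your cadence is different. The point is to look at your own number, not mine.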

And that's conservative. Many teams do multiple refinement sessions. Many re-estimate work multiple times as requirements change.

The Confidence Game

Your product manager asks if the team can commit to shipping Feature X by end of quarter. Your engineering manager looks at the story point estimates. The math says yes—barely.

Everyone in the room knows the estimate is optimistic. Everyone knows requirements will change. Everyone knows there will be unexpected technical debt.

But the math says yes, so management commits.

Now you're locked into a deadline based on guesses pretending to be data.

When the deadline approaches and you're not done, management asks why. You explain the unforeseen complexity. They point to your estimates and suggest you're not executing well. After all, you estimated this work. You committed to these points.

Estimates create a false objectivity that turns every delay into an engineering failure rather than an acknowledgment of irreducible uncertainty.

The Gaming Begins

Once your team realizes estimates become commitments, the gaming starts.

Developers pad estimates to create buffer. Management pressures for lower estimates to fit more work in the roadmap. Mid-level managers massage the numbers to make their commitments look achievable.

Planning poker becomes a negotiation, not an engineering assessment. The question isn't "how complex is this work?" It's "what number satisfies everyone's political needs?"

You've transformed technical planning into theater where everyone performs confidence they don't feel.

Opportunity Cost

Every hour spent estimating is an hour not spent writing code, fixing bugs, improving architecture, automating painful processes, or talking to users about what they actually need.

For senior engineers especially, estimation ceremonies are often the lowest-value use of their time. Your most expensive, most experienced resources spend hours debating whether something is 13 points or 20 points.

The actual difference between 13 and 20 is meaningless. Both are wrong. But you've spent 30 minutes of collective senior engineering time debating the distinction.

Why Overconfident Estimates Are Actually Beneficial

Here's where this gets truly contrarian: if you must estimate, deliberately overconfident estimates might be better than "accurate" ones.

Sound crazy? Consider the alternative.

When teams give realistic estimates—including padding for uncertainty, technical debt, integration problems, and requirement changes—they give numbers management finds unacceptable.

"This feature will take 6-8 sprints" gets rejected. Product says the business can't wait that long. Management asks what you can cut to make it fit in 3 sprints. You end up negotiating a middle ground of 4 sprints that satisfies nobody and will prove wrong anyway.

Now consider the overconfident estimate.

You say 2 sprints. Everyone is happy. You start work.

After one sprint, it's obvious this will take longer. You have a conversation about why.

Here's the key: that conversation happens when you have real information.

You're not debating theoretical complexity in a vacuum. You're discussing actual code, actual problems, actual trade-offs. The conversation is grounded in reality, not guesses.

"We've implemented the core logic, but discovered the third-party API doesn't support the workflow we designed. We need to either build a workaround or change the feature. Here are the options."

This is infinitely more valuable than spending hours up-front trying to predict you'd hit this problem.

Overconfident estimates get you started faster. They defer detailed planning until you have information to plan with. They force product and engineering to collaborate on real trade-offs, not theoretical ones.

Yes, you'll miss the initial estimate. But you were going to miss the "realistic" estimate too. At least this way you didn't waste time pretending otherwise.

Embracing Uncertainty: Working Without Estimates

The grown-up response to estimation failure isn't better estimation. It's acknowledging that for complex knowledge work, estimates provide false precision, and you should structure your process differently.

Here's what that actually looks like.

Step 1: Slice Work Into Deployable Increments

Stop trying to estimate large features. Break work into the smallest deployable piece that delivers any value.

Not: "Rebuild the notification system - 40 points"

Instead:

- "Send email when user's post gets first comment - ship it"

- "Add email preference toggle - ship it"

- "Support digest mode instead of immediate - ship it"

- "Add in-app notification badge - ship it"

Each piece ships to production. Each piece delivers value. Each piece takes days, not weeks.

You'll never estimate any individual item perfectly. But it doesn't matter because you're shipping continuously. Stakeholders see progress in working software, not theoretical velocity.

Step 2: Establish a Clear Priority Stack

Without estimates, you can't promise delivery dates. You need a different contract with stakeholders:

"We will always work on the most important thing next."

Maintain a strict priority stack. Top item is what's being worked on now. Second item is next. Everything else is ordered but not committed.

When stakeholders ask "when will Feature X ship?" the answer is: "We're currently working on items 1 and 2. Feature X is item 7. Based on our historical throughput, probably 3-4 weeks, but if you want it sooner, tell us what to deprioritize."

This forces honest conversations about trade-offs instead of fictional negotiations about estimates.

Step 3: Track Flow Metrics, Not Velocity

Stop measuring story points delivered. Start measuring:

- Cycle time: How long from starting work to production?

- Throughput: How many items completed per week?

- Work in progress: How many items in flight simultaneously?

These metrics are actual data, not subjective points. They tell you if you're getting faster or slower. They highlight bottlenecks. They give stakeholders realistic expectations based on past performance.
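
None of this needs a special tool. A minimal sketch of the three metrics, assuming you can export a start date and a ship date per item from your tracker (the `WorkItem` fields and the sample tickets are hypothetical):

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean
from typing import Optional


@dataclass
class WorkItem:
    """One ticket. Field names are hypothetical; map them to your tracker's export."""
    title: str
    started: date
    shipped: Optional[date] = None  # None means still in flight


def cycle_times_days(items):
    """Days from start of work to production, for shipped items only."""
    return [(i.shipped - i.started).days for i in items if i.shipped]


def throughput_per_week(items, window_start: date, window_end: date) -> float:
    """Items shipped per week inside the window (both ends inclusive)."""
    shipped = [i for i in items
               if i.shipped and window_start <= i.shipped <= window_end]
    weeks = ((window_end - window_start).days + 1) / 7
    return len(shipped) / weeks


def work_in_progress(items, on: date) -> int:
    """Items started on or before `on` that had not shipped by then."""
    return sum(1 for i in items
               if i.started <= on and (i.shipped is None or i.shipped > on))


items = [
    WorkItem("Email on first comment", date(2024, 3, 4), date(2024, 3, 6)),
    WorkItem("Email preference toggle", date(2024, 3, 5), date(2024, 3, 8)),
    WorkItem("Digest mode", date(2024, 3, 11), date(2024, 3, 15)),
    WorkItem("In-app badge", date(2024, 3, 14)),
]

print(mean(cycle_times_days(items)))  # average cycle time in days
print(throughput_per_week(items, date(2024, 3, 4), date(2024, 3, 17)))
print(work_in_progress(items, date(2024, 3, 14)))
```

Calendar days are used here for simplicity. If weekends distort your numbers, counting business days is a reasonable variation.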

"We've completed 12 items in the last 3 weeks, average cycle time 3.5 days. Your feature breaks into 8 items. Probably ships in 2-3 weeks if priorities don't change."

No story points. No estimation ceremony. Just data and probability.
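
If you want the "probability" part to be more than a gut feel, one common approach is to resample your past weekly throughput many times and see how long the remaining items take in each simulated future. The sketch below is one way to do that, not a standard implementation. The weekly split of 3, 5, and 4 items is a hypothetical breakdown of "12 items in 3 weeks", and the helper names are made up.

```python
import math
import random


def forecast_weeks(weekly_throughput, items_remaining, runs=10_000, seed=0):
    """Simulate many futures by resampling past weeks; return sorted week counts."""
    if not any(weekly_throughput):
        raise ValueError("need at least one past week with completed items")
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    outcomes = []
    for _ in range(runs):
        done, weeks = 0, 0
        while done < items_remaining:
            done += rng.choice(weekly_throughput)  # pretend next week looks like a past one
            weeks += 1
        outcomes.append(weeks)
    return sorted(outcomes)


def percentile(sorted_values, pct):
    """Nearest-rank percentile of an already sorted list."""
    rank = math.ceil(len(sorted_values) * pct / 100)
    return sorted_values[max(rank, 1) - 1]


history = [3, 5, 4]  # items completed in each of the last three weeks (hypothetical split)
outcomes = forecast_weeks(history, items_remaining=8)

print("50% of simulated futures finish within", percentile(outcomes, 50), "weeks")
print("85% of simulated futures finish within", percentile(outcomes, 85), "weeks")
```

With this toy history every simulated run finishes in 2 or 3 weeks, which is the same "2-3 weeks" answer, just with the reasoning written down. Three weeks of history is thin; the forecast only gets as good as the data you feed it.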

Step 4: Budget Time, Not Scope

For larger initiatives, flip the question.

Instead of "how long will this feature take?" ask "what can we deliver in 4 weeks?"

Time-box the work. Define the problem you're solving and success metrics. Then work iteratively, shipping progressively better solutions until the time box expires.

After 4 weeks, you'll have something in production. It might not be the original vision, but it will be real software that users can interact with. You can then decide: is this sufficient, or is it worth another time box?

This eliminates the death march toward a deadline based on fictional estimates. You're always in control. You can always ship what you have.

Step 5: Default to Continuous Deployment

The real antidote to estimation is reducing batch size to nearly zero.

When you deploy multiple times per day, when features ship incrementally behind flags, when rollback is trivial—deadlines lose their urgency.

"Will this be done by Friday?" becomes meaningless when partial versions ship Tuesday and Thursday and you'll iterate based on user feedback.

Continuous deployment doesn't eliminate the need for prioritization. But it eliminates the estimation theater around coordination and releases.
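
Shipping behind flags sounds heavier than it is. A minimal sketch of a percentage rollout, assuming string user IDs and an in-memory config (the flag names, the `ROLLOUT` dict, and `notify` are all hypothetical; a real system would load the percentages from configuration or a flag service):

```python
import hashlib


def bucket(user_id: str, flag_name: str) -> int:
    """Stable bucket from 0 to 99 for a given user and flag."""
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode()).hexdigest()
    return int(digest, 16) % 100


def is_enabled(flag_name: str, user_id: str, rollout: dict) -> bool:
    """True if the user falls inside the flag's rollout percentage."""
    return bucket(user_id, flag_name) < rollout.get(flag_name, 0)


# Percent of users who see each feature. Unknown flags default to 0 (off).
ROLLOUT = {"digest_mode": 25, "inapp_badge": 100}


def notify(user_id: str) -> str:
    """Pick the notification path for a user based on the digest flag."""
    if is_enabled("digest_mode", user_id, ROLLOUT):
        return "queued for digest"
    return "sent immediately"


print(notify("user-42"))
```

Hashing the user ID keeps each user on the same side of the flag between requests. Rollback is setting the percentage to 0, which is why a half-finished feature can sit in production without anyone arguing about Friday.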

The Practical Transition Checklist

You typically can't abolish estimation overnight. Here's how to wean your team off the points addiction:

Month 1: Start Tracking Real Metrics

- Set up cycle time tracking (Jira, Linear, or GitHub issues all support this)

- Count items completed per sprint (regardless of points)

- Measure estimation accuracy: compare the points each item was given against its actual cycle time

- Share data showing how inaccurate estimates actually are
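
For the accuracy comparison, a spreadsheet works, but a few lines of script make the point just as well. This sketch groups actual cycle times by the points each item was given. The records are made-up numbers for illustration, and the function name is hypothetical.

```python
from collections import defaultdict
from statistics import median


def cycle_time_by_points(records):
    """Group actual cycle times (days) by the story points originally assigned."""
    groups = defaultdict(list)
    for points, days in records:
        groups[points].append(days)
    return {
        points: {"n": len(days), "median": median(days),
                 "min": min(days), "max": max(days)}
        for points, days in sorted(groups.items())
    }


# (story points, actual cycle time in days); invented sample data
records = [(3, 2), (3, 9), (3, 4), (5, 3), (5, 12), (8, 5), (8, 6), (8, 21)]

for points, stats in cycle_time_by_points(records).items():
    print(points, stats)
```

If the min-to-max ranges for different point values overlap heavily in your real data, that overlap is the chart to share.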

Month 2: Reduce Estimation Granularity

- Move to t-shirt sizes (S/M/L) instead of Fibonacci points

- Set a 15-minute timer for estimation sessions—when it expires, move on

- Stop re-estimating carry-over work

- Track how much time you save

Month 3: Experiment With No-Estimate Sprints

- Pick one sprint to run without estimates

- Focus purely on priority order and cycle time

- Compare throughput to previous sprints

- Gather team feedback on time saved

Month 4: Shift Stakeholder Conversations

- Present roadmap as priority-ordered list, not timeline

- Answer "when" questions with historical throughput data

- Offer time-boxed experiments instead of estimated projects

- Practice saying "we'll work on the most important thing next"

Months 5-6: Institutionalize Flow

- Replace velocity charts with cycle time and throughput

- Make continuous deployment the norm

- Celebrate shipped increments, not completed points

- Retrospect on quality of technical conversations without estimation theater

The goal isn't to eliminate all planning. It's to eliminate the pseudoscience pretending that planning poker gives you predictive power.

The Uncomfortable Truth

Management wants certainty. Estimation theater gives them the illusion without the substance.

When you abandon estimates, you're forced to have harder, more honest conversations:

"We can't promise this will ship by June. We can promise we'll work on nothing else until it ships, and we'll deploy increments weekly so you see progress."

"We can't tell you if this is possible in one quarter. We can build a prototype in 2 weeks and then tell you what's actually involved."

"We can't commit to all five features. We can commit to working your priority order and shipping the most important things first."

These conversations feel uncomfortable because they expose the irreducible uncertainty in software development.

But they're honest. And they're more respectful of everyone's time than pretending story points solve the prediction problem.

Start Small, Start Now

You don't need permission to stop wasting time in estimation ceremonies.

Start with your team. Propose an experiment: one sprint without estimates. Just priority order and cycle time.

Track what happens. Measure throughput. Measure time saved. Measure whether stakeholders actually notice the absence of story points.

My prediction: throughput stays the same or improves. Stakeholder satisfaction stays the same or improves. Developer satisfaction definitely improves.

Because you've eliminated theater and replaced it with actual work.

The Agile industrial complex won't like this. Too many consultants selling estimation training. Too many tools charging for story point tracking. Too many managers whose value proposition is "I keep the team accountable to their estimates."

But you're not optimizing for the Agile industrial complex. You're optimizing for shipping software.

Shipping software doesn't require predicting the future. It requires working on the most important thing next, deploying frequently, and being honest about uncertainty.

Stop pointing stories. Start shipping software.

I've spent twenty-five years watching engineers try to fix broken processes. The ones who succeeded had data, allies, and patience. The ones who failed had opinions, frustration, and a retro slot.